Meet Emo, a humanoid robot that can mimic facial expressions and smile before you do

Researchers at Columbia University recently unveiled a new humanoid robot named 'Emo' that can mimic facial expressions in real time and even smile before you do.

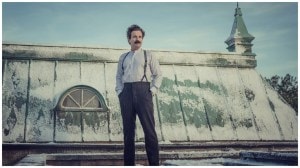

The study's lead author, Yuhang Hu, a PhD student at Columbia Engineering in Hod Lipson’s lab. (Credit: John Abbott/Columbia Engineering)

AI is pretty good at mimicking humans when it comes to speech, but robots that can copy our facial expressions still lag behind. That might soon change, as researchers at Columbia University’s Creative Machines Lab have unveiled a new humanoid robot called “Emo” that can mimic facial expressions in real time.

According to a study recently published in Science Robotics, Emo can rotate its head while anticipating and mirroring human facial expressions, and can even begin to smile 839 milliseconds before you do.

As of now, most robots that try to mimic a person’s expressions have a noticeable delay, since they can only imitate a face after the expression has already appeared. The lag often makes the interaction feel fake, and many people interacting with social robots for the first time express disappointment over it.

The team behind Emo says it set out to fix this very issue. To make the new humanoid robot, a team of AI and robotics experts led by Hod Lipson built a realistic robotic head with 26 actuators that reproduce muscle-like movements and enable tiny but noticeable facial expressions. Emo’s eyes also house two high-resolution cameras that help the robot make eye contact with people. The actuators and motors are covered by a blue silicone skin that gives the robot a realistic-looking human face.

The researchers also developed two AI models, one of which is responsible for predicting human expressions while the other controls the actuators behind the silicone skin. These AI models were trained using sample videos of various facial expressions, with the study claiming that Emo learned even the smallest facial muscle movements in a few hours. The humanoid robot can correctly predict which facial expression a human is about to make around 70 per cent of the time, and can also raise its eyebrows and frown.

Emo can currently interact only through facial expressions, but researchers say the humanoid robot might be able to talk using large language model systems like ChatGPT. Yuhang Hu, the study’s lead author, says that the current version of Emo relies solely on jaw movements and that the robot’s lips still need some work. With rapid advances in robots that can move like humans, we may see a version of Emo with a full body that can mimic and even help humans sometime in the future.
