Journal Description

Big Data and Cognitive Computing

Big Data and Cognitive Computing is an international, peer-reviewed, open access journal on big data and cognitive computing published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore Q1 (Management Information Systems)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 18.2 days after submission; acceptance to publication is undertaken in 3.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.7 (2022)

Latest Articles

Autonomous Vehicles: Evolution of Artificial Intelligence and the Current Industry Landscape

Big Data Cogn. Comput. 2024, 8(4), 42; https://doi.org/10.3390/bdcc8040042 - 07 Apr 2024

Abstract

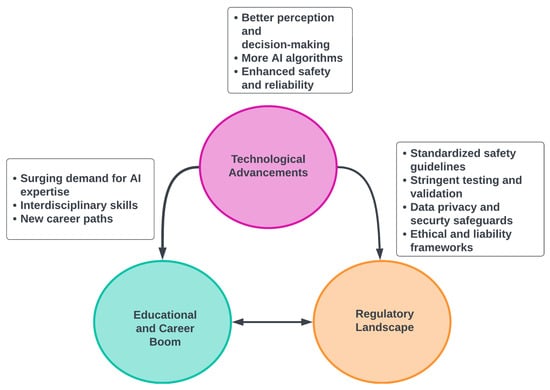

The advent of autonomous vehicles has heralded a transformative era in transportation, reshaping the landscape of mobility through cutting-edge technologies. Central to this evolution is the integration of artificial intelligence (AI), propelling vehicles into realms of unprecedented autonomy. Commencing with an overview of the current industry landscape with respect to the Operational Design Domain (ODD), this paper delves into the fundamental role of AI in shaping the autonomous decision-making capabilities of vehicles. It elucidates the steps involved in the AI-powered development life cycle in vehicles, addressing challenges such as safety, security, privacy, and ethical considerations in AI-driven software development for autonomous vehicles. The study presents statistical insights into the usage and types of AI algorithms over the years, showcasing the evolving research landscape within the automotive industry. Furthermore, the paper highlights the pivotal role of parameters in refining algorithms for both trucks and cars, enabling vehicles to adapt, learn, and improve performance over time. It concludes by outlining different levels of autonomy, elucidating the nuanced usage of AI algorithms, and discussing the automation of key tasks and the software package size at each level. Overall, the paper provides a comprehensive analysis of the current industry landscape.

(This article belongs to the Special Issue Deep Network Learning and Its Applications)

Open Access Article

Data Sorting Influence on Short Text Manual Labeling Quality for Hierarchical Classification

by

Olga Narushynska, Vasyl Teslyuk, Anastasiya Doroshenko and Maksym Arzubov

Big Data Cogn. Comput. 2024, 8(4), 41; https://doi.org/10.3390/bdcc8040041 - 07 Apr 2024

Abstract

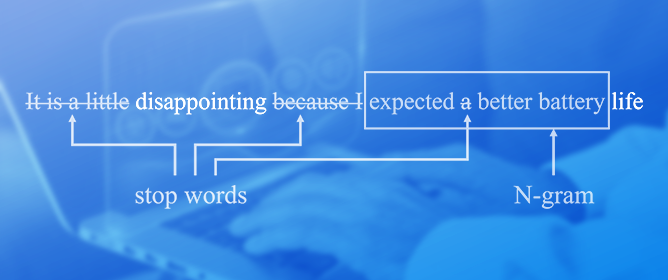

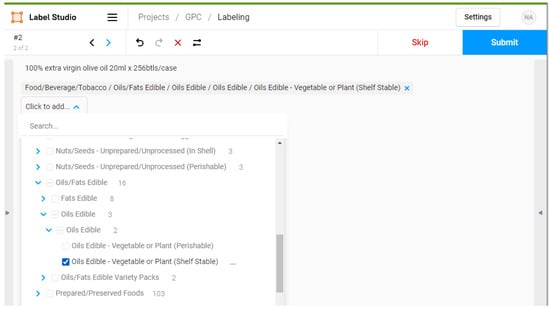

The precise categorization of brief texts holds significant importance in various applications within the ever-changing realm of artificial intelligence (AI) and natural language processing (NLP). Short texts are everywhere in the digital world, from social media updates to customer reviews and feedback. Nevertheless, short texts’ limited length and context pose unique challenges for accurate classification. This research article delves into the influence of data sorting methods on the quality of manual labeling in hierarchical classification, with a particular focus on short texts. The study is set against the backdrop of the increasing reliance on manual labeling in AI and NLP, highlighting its significance in the accuracy of hierarchical text classification. Methodologically, the study integrates AI, notably zero-shot learning, with human annotation processes to examine the efficacy of various data-sorting strategies. The results demonstrate how different sorting approaches impact the accuracy and consistency of manual labeling, a critical aspect of creating high-quality datasets for NLP applications. The study’s findings reveal a significant time efficiency improvement in terms of labeling, where ordered manual labeling required 760 min per 1000 samples, compared to 800 min for traditional manual labeling, illustrating the practical benefits of optimized data sorting strategies. Comparatively, ordered manual labeling achieved the highest mean accuracy rates across all hierarchical levels, with figures reaching up to 99% for segments, 95% for families, 92% for classes, and 90% for bricks, underscoring the efficiency of structured data sorting. It offers valuable insights and practical guidelines for improving labeling quality in hierarchical classification tasks, thereby advancing the precision of text analysis in AI-driven research. 

(This article belongs to the Special Issue Natural Language Processing and Event Extraction for Big Data)

Open Access Article

Generating Synthetic Sperm Whale Voice Data Using StyleGAN2-ADA

by

Ekaterina Kopets, Tatiana Shpilevaya, Oleg Vasilchenko, Artur Karimov and Denis Butusov

Big Data Cogn. Comput. 2024, 8(4), 40; https://doi.org/10.3390/bdcc8040040 - 03 Apr 2024

Abstract

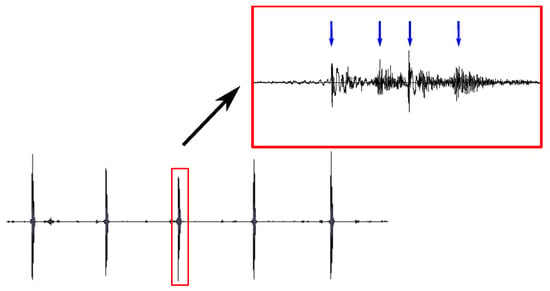

The application of deep learning neural networks enables the processing of extensive volumes of data and often requires dense datasets. In certain domains, particularly marine biology, researchers encounter challenges related to the scarcity of training data. In addition, many sounds produced by sea mammals are of interest in technical applications, e.g., underwater communication or sonar construction. Thus, generating synthetic biological sounds is an important task for understanding and studying the behavior of various animal species, especially large sea mammals, which demonstrate complex social behavior and can use echolocation to navigate underwater. This study is devoted to generating sperm whale vocalizations using a limited sperm whale click dataset. Our approach uses an augmentation technique based on transforming the spectrograms of audio samples, followed by the generative adversarial network StyleGAN2-ADA to generate new audio data. The results show that the chosen augmentation method, namely mixing along the time axis, makes it possible to create fairly similar sperm whale clicks with a maximum deviation of 2%. The generation of new clicks was reproduced on datasets using the selected augmentation approaches with two neural networks: StyleGAN2-ADA and WaveGAN. StyleGAN2-ADA, trained on a dataset augmented with the time-axis mixing approach, showed better results than WaveGAN.

Open Access Article

Automating Feature Extraction from Entity-Relation Models: Experimental Evaluation of Machine Learning Methods for Relational Learning

by

Boris Stanoev, Goran Mitrov, Andrea Kulakov, Georgina Mirceva, Petre Lameski and Eftim Zdravevski

Big Data Cogn. Comput. 2024, 8(4), 39; https://doi.org/10.3390/bdcc8040039 - 01 Apr 2024

Abstract

With the exponential growth of data, extracting actionable insights becomes resource-intensive. In many organizations, normalized relational databases store a significant portion of this data, with tables interconnected through relations. This paper explores relational learning, which involves joining and merging database tables, often normalized to the third normal form. The subsequent processing includes extracting features and using them in machine learning (ML) models. In this paper, we experiment with a propositionalization algorithm (Wordification) for feature engineering. Next, we compare PropDRM and PropStar, algorithms designed explicitly for multi-relational data mining, with traditional machine learning algorithms. Based on the experiments, we conclude that Gradient Boosting achieves performance similar to PropDRM (F1 score, accuracy, and AUC) on multiple datasets. PropStar consistently underperformed on some datasets while being comparable to the other algorithms on others. In summary, using the propositionalization algorithm for feature extraction makes it feasible to apply traditional ML algorithms directly to relational learning. In contrast, approaches tailored specifically for relational learning still face challenges in scalability, interpretability, and efficiency. These findings have practical implications: they can help speed up the adoption of machine learning in business contexts where data is stored in relational format, without requiring domain-specific feature extraction.
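
The Wordification step can be illustrated with a minimal sketch. Assuming the commonly described scheme, in which each row of the target table becomes a "document" of words of the form `table_attribute_value`, gathered from that row and from related rows reached through a foreign key, the Python below builds such bags of words. The `customer` and `order` tables, their columns, and the helper name `wordify` are hypothetical; the sketch omits the word-conjunction features and TF-IDF weighting a full implementation may add, and it is not the authors' code.

```python
from collections import defaultdict


def wordify(main_name, main_rows, key, related):
    """Turn each main-table row into a bag of 'table_attribute_value' words.

    main_rows: list of dicts, each holding the primary key `key`.
    related:   dict mapping a related table name to (foreign_key, rows),
               where each row's foreign_key value refers to a main-table key.
    Returns a dict from primary-key value to that row's list of words.
    """
    # Index each related table by its foreign key for fast lookup.
    indexed = {}
    for table, (fk, rows) in related.items():
        by_key = defaultdict(list)
        for row in rows:
            by_key[row[fk]].append(row)
        indexed[table] = (fk, by_key)

    documents = {}
    for row in main_rows:
        # Words from the main table itself (the key is an identifier, not a feature).
        words = [f"{main_name}_{attr}_{val}" for attr, val in row.items() if attr != key]
        # Words from every related row linked to this main row.
        for table, (fk, by_key) in indexed.items():
            for rel_row in by_key.get(row[key], []):
                words.extend(
                    f"{table}_{attr}_{val}" for attr, val in rel_row.items() if attr != fk
                )
        documents[row[key]] = words
    return documents


# Hypothetical example data.
customers = [{"id": 1, "segment": "retail"}, {"id": 2, "segment": "corporate"}]
orders = [
    {"customer_id": 1, "channel": "web"},
    {"customer_id": 1, "channel": "store"},
    {"customer_id": 2, "channel": "web"},
]
docs = wordify("customer", customers, "id", {"order": ("customer_id", orders)})
print(docs[1])  # ['customer_segment_retail', 'order_channel_web', 'order_channel_store']
```

The resulting word lists can be passed to any bag-of-words vectorizer, after which a traditional classifier such as gradient boosting can be trained on the flat feature matrix.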

(This article belongs to the Special Issue Machine Learning in Data Mining for Knowledge Discovery)

Open Access Article

Comparing Hierarchical Approaches to Enhance Supervised Emotive Text Classification

by

Lowri Williams, Eirini Anthi and Pete Burnap

Big Data Cogn. Comput. 2024, 8(4), 38; https://doi.org/10.3390/bdcc8040038 - 29 Mar 2024

Abstract

The performance of emotive text classification using affective hierarchical schemes (e.g., WordNet-Affect) is often evaluated using the same traditional measures used when classes form a finite set of isolated labels. However, applying such measures means that the full characteristics and structure of the emotive hierarchical scheme are not considered. Thus, the overall performance of emotive text classification using emotion hierarchical schemes is often inaccurately reported, which may lead to ineffective information retrieval and decision making. This paper provides a comparative investigation into how methods used for hierarchical classification problems in other domains, which extend traditional evaluation metrics to consider the characteristics of the hierarchical classification scheme, can be applied to and subsequently improve the classification of emotive texts. This study investigates the classification performance of three widely used classifiers, Naive Bayes, J48 Decision Tree, and SVM, following the application of the aforementioned methods. The results demonstrate that all the methods improved emotion classification. However, the most notable improvement was recorded when a depth-based method was applied to both the testing and validation data, where precision, recall, and F1-score were significantly improved by around 70 percentage points for each classifier.
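
The abstract does not detail the depth-based method, so the sketch below is only one illustration of a hierarchy-aware measure: set-based hierarchical precision, recall, and F1, in which every true and predicted label is expanded with its ancestors so that a near miss (for example, predicting a sibling emotion) earns partial credit. The two-level emotion hierarchy and the function names are hypothetical, chosen for illustration; they are not taken from WordNet-Affect or from the paper.

```python
def with_ancestors(label, parent):
    """Return the label together with all of its ancestors in the hierarchy.

    `parent` maps each label to its parent; top-level labels map to None,
    so the implicit root is never counted.
    """
    nodes = set()
    while label is not None:
        nodes.add(label)
        label = parent.get(label)
    return nodes


def hierarchical_prf(y_true, y_pred, parent):
    """Set-based hierarchical precision, recall, and F1 (micro-averaged)."""
    overlap = true_total = pred_total = 0
    for t, p in zip(y_true, y_pred):
        t_set = with_ancestors(t, parent)
        p_set = with_ancestors(p, parent)
        overlap += len(t_set & p_set)
        true_total += len(t_set)
        pred_total += len(p_set)
    h_precision = overlap / pred_total
    h_recall = overlap / true_total
    denom = h_precision + h_recall
    h_f1 = 2 * h_precision * h_recall / denom if denom else 0.0
    return h_precision, h_recall, h_f1


# Hypothetical two-level emotion hierarchy.
PARENT = {"positive": None, "negative": None,
          "joy": "positive", "love": "positive",
          "anger": "negative", "fear": "negative"}

y_true = ["joy", "anger"]
y_pred = ["love", "anger"]  # one sibling confusion, one exact hit
print(hierarchical_prf(y_true, y_pred, PARENT))  # (0.75, 0.75, 0.75)
```

Flat accuracy on the same two predictions would be 0.5; the hierarchical score is higher because "love" and "joy" share the ancestor "positive".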

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

Cybercrime Risk Found in Employee Behavior Big Data Using Semi-Supervised Machine Learning with Personality Theories

by

Kenneth David Strang

Big Data Cogn. Comput. 2024, 8(4), 37; https://doi.org/10.3390/bdcc8040037 - 29 Mar 2024

Abstract

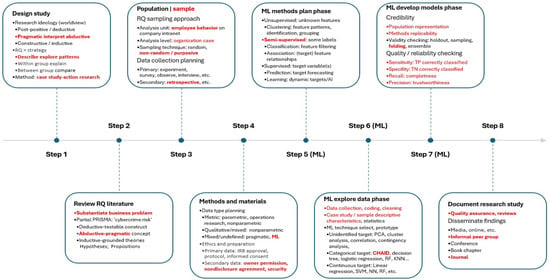

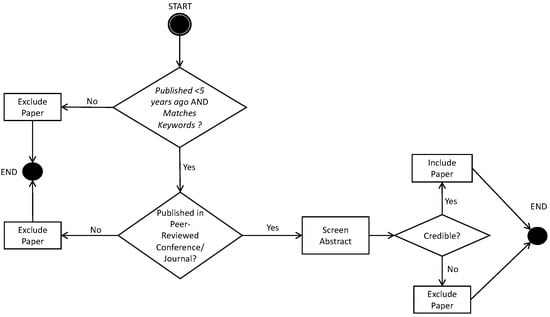

A critical worldwide problem is that ransomware cyberattacks can be costly to organizations. Moreover, accidental employee cybercrime risk can be challenging to prevent, even with advanced computer science techniques. This exploratory project used a novel cognitive computing design, with detailed explanations of the action-research case-study methodology and customized machine learning (ML) techniques, supplemented by a workflow diagram. The ML techniques included language preprocessing, normalization, tokenization, keyword association analytics, learning tree analysis, credibility/reliability/validity checks, heatmaps, and scatter plots. The author analyzed over 8 GB of employee behavior big data from the global intranet of a multinational Fintech company. The five-factor personality theory (FFPT) from psychology was integrated into semi-supervised ML to classify retrospective employee behavior and then identify cybercrime risk. Higher levels of employee neuroticism were associated with greater organizational cybercrime risk, corroborating the findings of empirical publications. In stark contrast to the literature, openness to new experiences was inversely related to cybercrime risk. The other FFPT factors, conscientiousness, agreeableness, and extroversion, had no informative association with cybercrime risk. This study introduced an interdisciplinary paradigm shift for big data cognitive computing by illustrating how to integrate a proven scientific construct from psychology, personality theory, into ML to analyze human behavior using a retrospective big data collection approach that was asserted to be more efficient, reliable, and valid than traditional methods such as surveys or interviews.

(This article belongs to the Special Issue Applied Data Science for Social Good)

Open Access Review

From Traditional Recommender Systems to GPT-Based Chatbots: A Survey of Recent Developments and Future Directions

by

Tamim Mahmud Al-Hasan, Aya Nabil Sayed, Faycal Bensaali, Yassine Himeur, Iraklis Varlamis and George Dimitrakopoulos

Big Data Cogn. Comput. 2024, 8(4), 36; https://doi.org/10.3390/bdcc8040036 - 27 Mar 2024

Abstract

Recommender systems are a key technology for many applications, such as e-commerce, streaming media, and social media. Traditional recommender systems rely on collaborative filtering or content-based filtering to make recommendations. However, these approaches have limitations, such as the cold-start and data-sparsity problems. This survey paper presents an in-depth analysis of the paradigm shift from conventional recommender systems to chatbots based on generative pre-trained transformers (GPT). We highlight recent developments that leverage GPT to create interactive and personalized conversational agents. By exploring natural language processing (NLP) and deep learning techniques, we investigate how GPT models can better understand user preferences and provide context-aware recommendations. The paper further evaluates the advantages and limitations of GPT-based recommender systems, comparing their performance with that of traditional methods. Additionally, we discuss potential future directions, including the role of reinforcement learning in refining the personalization of these systems.

(This article belongs to the Special Issue Artificial Intelligence and Natural Language Processing)

Open Access Article

Two-Stage Method for Clothing Feature Detection

by

Xinwei Lyu, Xinjia Li, Yuexin Zhang and Wenlian Lu

Big Data Cogn. Comput. 2024, 8(4), 35; https://doi.org/10.3390/bdcc8040035 - 26 Mar 2024

Abstract

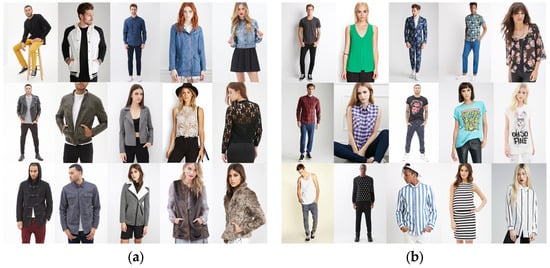

The rapid expansion of e-commerce, particularly in the clothing sector, has led to a significant demand for an effective clothing industry. This study presents a novel two-stage image recognition method. Our approach distinctively combines human keypoint detection, object detection, and classification methods into a two-stage structure. In the first stage, we use the open-source libraries OpenPose and Dlib for accurate human keypoint detection, followed by custom cropping logic for extracting body-part boxes. In the second stage, we employ a blend of Harris corner, Canny edge, and skin pixel detection integrated with VGG16 and support vector machine (SVM) models. This configuration allows ten unique attributes, encompassing facial features and detailed aspects of clothing, to be identified within the bounding boxes. In the experiments, the method achieved an overall recognition accuracy of 81.4% for tops and 85.72% for bottoms, highlighting the efficacy of the applied methodologies in garment categorization.

Open Access Article

A Comparative Study for Stock Market Forecast Based on a New Machine Learning Model

by

Enrique González-Núñez, Luis A. Trejo and Michael Kampouridis

Big Data Cogn. Comput. 2024, 8(4), 34; https://doi.org/10.3390/bdcc8040034 - 26 Mar 2024

Abstract

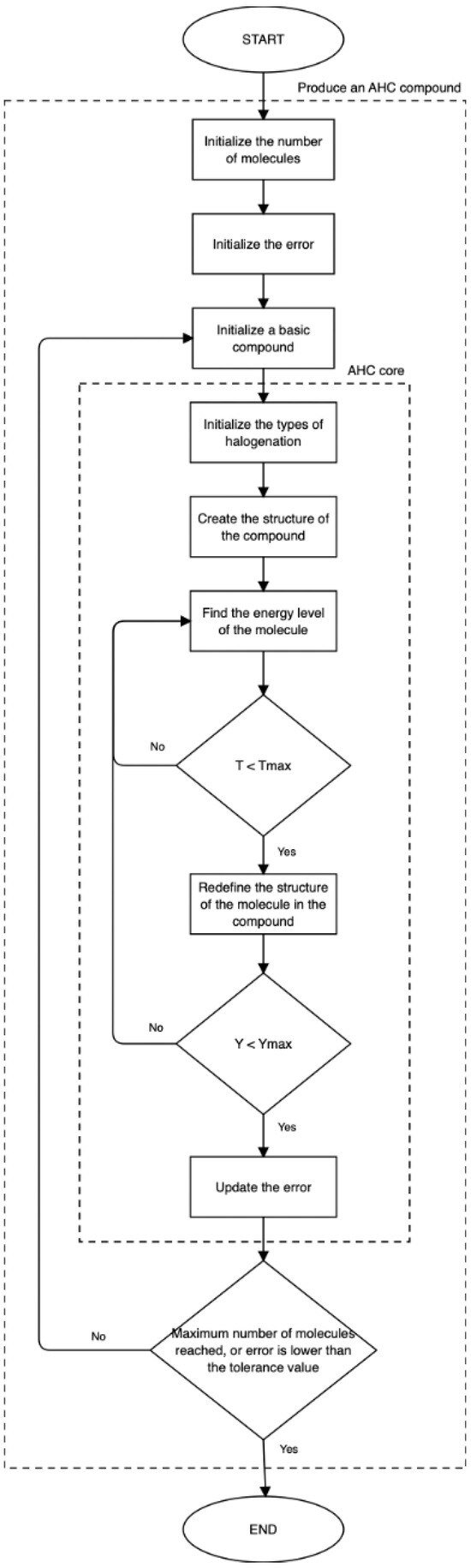

This research aims to apply the Artificial Organic Network (AON), a nature-inspired, supervised, metaheuristic machine learning framework, to develop a new algorithm based on this machine learning class. The focus of the new algorithm is to model and predict stock markets based on the Index Tracking Problem (ITP). In this work, we present a new algorithm based on the AON framework, which we call Artificial Halocarbon Compounds, or the AHC algorithm for short. In this study, we compare the AHC algorithm against genetic algorithms (GAs) by forecasting eight stock market indices. Additionally, we performed a cross-reference comparison against results regarding the forecast of other stock market indices based on state-of-the-art machine learning methods. The efficacy of the AHC model is evaluated by modeling each index, producing highly promising results. For instance, in the case of the IPC Mexico index, the R-square is 0.9806, with a mean relative error of

Open Access Article

Cancer Detection Using a New Hybrid Method Based on Pattern Recognition in MicroRNAs Combining Particle Swarm Optimization Algorithm and Artificial Neural Network

by

Sepideh Molaei, Stefano Cirillo and Giandomenico Solimando

Big Data Cogn. Comput. 2024, 8(3), 33; https://doi.org/10.3390/bdcc8030033 - 19 Mar 2024

Abstract

MicroRNAs (miRNAs) play a crucial role in cancer development, but not all miRNAs are equally significant in cancer detection. Traditional methods face challenges in effectively identifying cancer-associated miRNAs due to data complexity and volume. This study introduces a novel, feature-based technique for detecting attributes related to cancer-affecting microRNAs. It aims to enhance cancer diagnosis accuracy by identifying the most relevant miRNAs for various cancer types using a hybrid approach. In particular, we used a combination of particle swarm optimization (PSO) and artificial neural networks (ANNs) for this purpose. PSO was employed for feature selection, focusing on identifying the most informative miRNAs, while ANNs were used for recognizing patterns within the miRNA data. This hybrid method aims to overcome limitations in traditional miRNA analysis by reducing data redundancy and focusing on key genetic markers. The application of this method showed a significant improvement in the detection accuracy for various cancers, including breast and lung cancer and melanoma. Our approach demonstrated a higher precision in identifying relevant miRNAs compared to existing methods, as evidenced by the analysis of different datasets. The study concludes that the integration of PSO and ANNs provides a more efficient, cost-effective, and accurate method for cancer detection via miRNA analysis. This method can serve as a supplementary tool for cancer diagnosis and potentially aid in developing personalized cancer treatments.
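
To make PSO-based feature selection concrete, here is a minimal binary PSO sketch in Python, assuming the common sigmoid-transfer variant in which each particle is a 0/1 mask over candidate features (here, miRNAs). In the study, the fitness would come from the ANN's performance on the selected miRNAs; in this sketch a hypothetical `toy_fitness`, which rewards three arbitrarily chosen "informative" features and penalizes the rest, stands in for it. All parameter values (inertia `w`, coefficients `c1` and `c2`, `v_max`) are illustrative defaults, not the authors' settings.

```python
import math
import random


def binary_pso(fitness, n_features, n_particles=20, n_iter=50,
               w=0.7, c1=1.5, c2=1.5, v_max=4.0, seed=0):
    """Binary PSO that maximizes `fitness` over 0/1 feature masks.

    Each particle is a mask; velocities are squashed through a sigmoid
    to give the probability that each bit is set on the next step.
    """
    rng = random.Random(seed)
    pos = [[rng.randint(0, 1) for _ in range(n_features)] for _ in range(n_particles)]
    vel = [[0.0] * n_features for _ in range(n_particles)]

    # Personal bests and the global best found so far.
    pbest = [p[:] for p in pos]
    pbest_score = [fitness(p) for p in pos]
    best_i = max(range(n_particles), key=lambda i: pbest_score[i])
    gbest, gbest_score = pbest[best_i][:], pbest_score[best_i]

    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(n_features):
                r1, r2 = rng.random(), rng.random()
                v = (w * vel[i][d]
                     + c1 * r1 * (pbest[i][d] - pos[i][d])
                     + c2 * r2 * (gbest[d] - pos[i][d]))
                vel[i][d] = max(-v_max, min(v_max, v))  # clamp velocity
                prob_one = 1.0 / (1.0 + math.exp(-vel[i][d]))
                pos[i][d] = 1 if rng.random() < prob_one else 0
            score = fitness(pos[i])
            if score > pbest_score[i]:
                pbest[i], pbest_score[i] = pos[i][:], score
                if score > gbest_score:
                    gbest, gbest_score = pos[i][:], score
    return gbest, gbest_score


# Hypothetical stand-in for "ANN accuracy on the selected miRNAs".
INFORMATIVE = {0, 3, 5}


def toy_fitness(mask):
    hits = sum(mask[j] for j in INFORMATIVE)
    extras = sum(bit for j, bit in enumerate(mask) if j not in INFORMATIVE)
    return hits - 0.2 * extras


mask, score = binary_pso(toy_fitness, n_features=10)
print(mask, score)
```

Swapping `toy_fitness` for a function that trains and scores a classifier on the masked columns turns this into a wrapper-style selector, at the cost of one model fit per fitness evaluation.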

(This article belongs to the Special Issue Big Data and Information Science Technology)

Open Access Article

AI-Generated Text Detector for Arabic Language Using Encoder-Based Transformer Architecture

by

Hamed Alshammari, Ahmed El-Sayed and Khaled Elleithy

Big Data Cogn. Comput. 2024, 8(3), 32; https://doi.org/10.3390/bdcc8030032 - 18 Mar 2024

Abstract

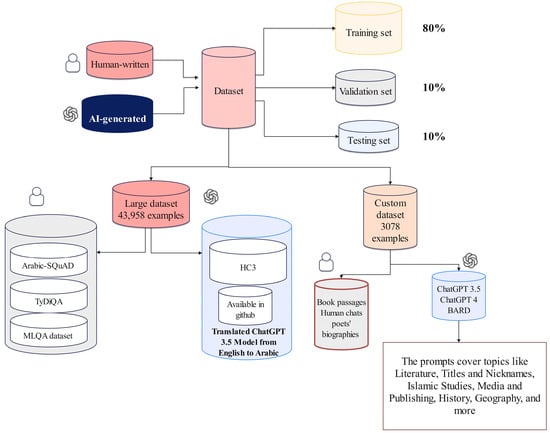

The effectiveness of existing AI detectors is notably hampered when processing Arabic texts. This study introduces a novel AI text classifier designed specifically for Arabic, tackling the distinct challenges inherent in processing this language. A particular focus is placed on accurately recognizing human-written texts (HWTs), an area where existing AI detectors have demonstrated significant limitations. To achieve this goal, this paper used and fine-tuned two Transformer-based models, AraELECTRA and XLM-R, by training them on two distinct datasets: a large dataset comprising 43,958 examples and a custom dataset with 3078 examples that contain HWTs and AI-generated texts (AIGTs) from various sources, including ChatGPT 3.5, ChatGPT-4, and BARD. The proposed architecture is adaptable to any language, but this work evaluates these models' efficiency in distinguishing HWTs from AIGTs in Arabic as an example of a Semitic language. The performance of the proposed models was compared against two prominent existing AI detectors, GPTZero and OpenAI Text Classifier, particularly on the AIRABIC benchmark dataset. The results reveal that the proposed classifiers outperform both, with 81% accuracy compared to 63% for GPTZero and 50% for OpenAI Text Classifier. Furthermore, integrating a Dediacritization Layer prior to the classification model significantly enhanced the detection accuracy of both HWTs and AIGTs, raising classification accuracy from 81% to as high as 99% and, in some instances, to 100%.
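
A dediacritization step of the kind described can be approximated by stripping Arabic diacritical marks before tokenization. The sketch below is an assumption about what such a layer does, not the authors' implementation: it removes the harakat in the Unicode range U+064B to U+0652 (tanween forms, fatha, damma, kasra, shadda, sukun) plus U+0670 (superscript alef) with a regular expression, leaving base letters and all other characters untouched.

```python
import re

# Arabic harakat U+064B-U+0652 (tanween forms, fatha, damma, kasra,
# shadda, sukun) plus U+0670 (superscript alef).
ARABIC_DIACRITICS = re.compile("[\u064B-\u0652\u0670]")


def dediacritize(text: str) -> str:
    """Remove Arabic diacritical marks, keeping base letters and all other text."""
    return ARABIC_DIACRITICS.sub("", text)


vocalized = "\u0643\u064E\u062A\u064E\u0628\u064E"  # kataba, written with three fathas
print(dediacritize(vocalized) == "\u0643\u062A\u0628")  # True
```

Applied to both training and inference text, this maps differently vocalized spellings of the same word to a single form, which is one plausible reason such a step helps a detector.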

Open Access Article

Machine Learning Approaches for Predicting Risk of Cardiometabolic Disease among University Students

by

Dhiaa Musleh, Ali Alkhwaja, Ibrahim Alkhwaja, Mohammed Alghamdi, Hussam Abahussain, Mohammed Albugami, Faisal Alfawaz, Said El-Ashker and Mohammed Al-Hariri

Big Data Cogn. Comput. 2024, 8(3), 31; https://doi.org/10.3390/bdcc8030031 - 13 Mar 2024

Abstract

Obesity is becoming an increasingly prevalent health concern among adolescents, leading to significant risks such as cardiometabolic diseases (CMDs). The early discovery and diagnosis of CMD are essential for better outcomes. This study aims to build a reliable artificial intelligence model that can predict CMD using various machine learning techniques. Five robust classifiers are compared: support vector machines (SVMs), K-nearest neighbors (KNN), logistic regression (LR), random forest (RF), and gradient boosting. A novel, previously unused "risk level" feature, derived by applying fuzzy logic to the Conicity Index, is introduced to enhance the interpretability and discriminatory properties of the proposed models. As the Conicity Index scores indicate CMD risk, two separate models are developed, one for each gender. The performance of the proposed models is assessed using two datasets obtained from 295 records of undergraduate students in Saudi Arabia, comprising 121 male and 174 female students with diverse risk levels. Notably, logistic regression emerges as the top performer among males, achieving an accuracy of 91%, while gradient boosting lags with 72%. Among females, both SVM and logistic regression lead with an accuracy of 87%, while random forest performs worst, at 80%.
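
For reference, the Conicity Index is commonly defined from waist circumference (WC), weight, and height as CI = WC / (0.109 * sqrt(weight / height)), with WC and height in metres and weight in kilograms. The sketch below computes only this index; the fuzzy-logic mapping from CI to the study's "risk level" feature is not described in the abstract and is therefore not reproduced. The function name and example measurements are hypothetical.

```python
import math


def conicity_index(waist_m: float, weight_kg: float, height_m: float) -> float:
    """Conicity Index: CI = WC / (0.109 * sqrt(weight / height)).

    Waist circumference (WC) and height are in metres, weight in kilograms.
    """
    if waist_m <= 0 or weight_kg <= 0 or height_m <= 0:
        raise ValueError("all measurements must be positive")
    return waist_m / (0.109 * math.sqrt(weight_kg / height_m))


# Hypothetical student: 0.80 m waist, 70 kg, 1.75 m tall.
print(round(conicity_index(0.80, 70.0, 1.75), 3))  # 1.16
```

A fuzzy "risk level" would then take this continuous score as input to membership functions; their shapes and cut-offs would have to come from the paper itself.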

(This article belongs to the Special Issue Revolutionizing Healthcare: Exploring the Latest Advances in Digital Health Technology)

Open Access Article

Proposal of a Service Model for Blockchain-Based Security Tokens

by

Keundug Park and Heung-Youl Youm

Big Data Cogn. Comput. 2024, 8(3), 30; https://doi.org/10.3390/bdcc8030030 - 12 Mar 2024

Abstract

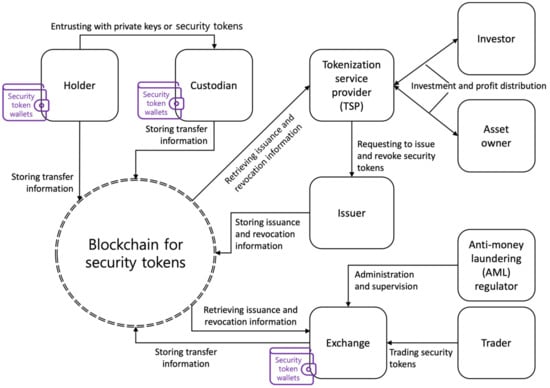

The volume of the asset investment and trading market can be expanded through the issuance and management of blockchain-based security tokens that logically divide the value of assets and guarantee ownership. This paper proposes a service model to solve a problem with the existing investment service model, identifies security threats to the service model, and specifies security requirements countering the identified security threats for privacy protection and anti-money laundering (AML) involving security tokens. The identified security threats and specified security requirements should be taken into consideration when implementing the proposed service model. The proposed service model allows users to invest in tokenized tangible and intangible assets and trade in blockchain-based security tokens. This paper discusses considerations to prevent excessive regulation and market monopoly in the issuance of and trading in security tokens when implementing the proposed service model, and concludes with directions for future work.

(This article belongs to the Special Issue Blockchain Meets IoT for Big Data)

Open Access Article

The Distribution and Accessibility of Elements of Tourism in Historic and Cultural Cities

by

Wei-Ling Hsu, Yi-Jheng Chang, Lin Mou, Juan-Wen Huang and Hsin-Lung Liu

Big Data Cogn. Comput. 2024, 8(3), 29; https://doi.org/10.3390/bdcc8030029 - 11 Mar 2024

Abstract

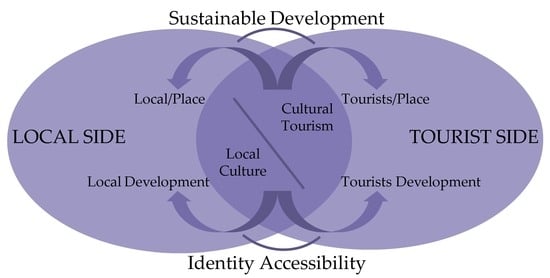

Historic urban areas are the foundations of urban development. Due to rapid urbanization, the sustainable development of historic urban areas has become challenging for many cities. Elements of tourism and tourism service facilities play an important role in the sustainable development of historic areas. This study analyzed policies related to tourism in the Panguifang and Meixian districts in Meizhou, Guangdong, China. Kernel density estimation was used to study the clustering characteristics of tourism elements through point of interest (POI) data, while space syntax was used to study the accessibility of roads. In addition, the Pearson correlation coefficient and regression were used to analyze the correlation between the elements and accessibility. The results show the following: (1) the overall number of tourism elements was high on the western side of the districts and low on the eastern side, and the elements were predominantly distributed along the main transportation arteries; (2) according to the integration degree and depth value, the western side was easier to access than the eastern side; and (3) the depth value of the area was negatively correlated with kernel density, while the degree of integration was positively correlated with it. Based on the results, the study puts forward measures for optimizing the elements of tourism in Meizhou's historic urban area to improve cultural tourism and emphasizes the importance of these elements.
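
The correlation step can be sketched as follows: given, for a set of street segments, a space-syntax integration value and the kernel density of tourism POIs at that segment, the Pearson coefficient measures their linear association. The arrays below are hypothetical placeholders, not the study's data, and the pairing of density values with segments is an assumption about how such an analysis is set up. In Python 3.10+, the standard library's `statistics.correlation` computes the same quantity.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical per-segment values (not from the study).
integration = [0.8, 1.1, 1.4, 1.6, 2.0]
kde_density = [12.0, 15.5, 21.0, 22.5, 30.0]
print(round(pearson(integration, kde_density), 3))
```

A positive coefficient for integration and a negative one for depth would correspond to finding (3) in the abstract.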

(This article belongs to the Special Issue Big Data Analytics for Cultural Heritage 2nd Edition)

Open Access Article

Enhancing Supervised Model Performance in Credit Risk Classification Using Sampling Strategies and Feature Ranking

by

Niwan Wattanakitrungroj, Pimchanok Wijitkajee, Saichon Jaiyen, Sunisa Sathapornvajana and Sasiporn Tongman

Big Data Cogn. Comput. 2024, 8(3), 28; https://doi.org/10.3390/bdcc8030028 - 06 Mar 2024

Abstract

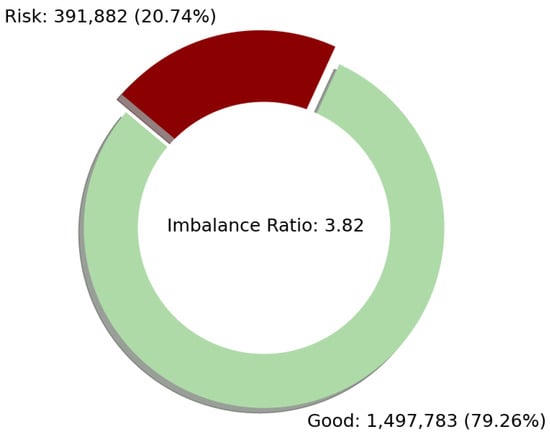

For the financial health of lenders and institutions, credit risk assessment, which is about correctly determining whether or not a borrower will fail to repay a loan, is an important form of risk assessment. It not only informs the approval or denial of loan applications but also helps manage the trend in non-performing loans (NPLs). In this study, experiments were conducted on a dataset provided by the LendingClub company, based in San Francisco, CA, USA, covering 2007 to 2020 and consisting of 2,925,492 records with 141 attributes. Loan status was categorized as “Good” or “Risk”. To obtain highly effective credit risk predictions, experiments were performed with three widely adopted supervised machine learning techniques: logistic regression, random forest, and gradient boosting. In addition, to address the imbalanced data problem, three sampling approaches were employed: under-sampling, over-sampling, and combined sampling. The results show that the gradient boosting technique achieves nearly perfect [...]

(This article belongs to the Topic Big Data and Artificial Intelligence, 2nd Volume)
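The three sampling strategies named in the credit risk abstract can be sketched with plain random sampling. This is a minimal illustration, assuming simple random under-sampling, random over-sampling with replacement, and a hypothetical "meet in the middle" combined scheme; the abstract does not say which specific algorithms the authors used (synthetic methods such as SMOTE are also common), and the 90/10 class split below is hypothetical rather than the LendingClub ratio.

```python
import random
from collections import Counter


def _group_by_class(rows, labels):
    groups = {}
    for row, label in zip(rows, labels):
        groups.setdefault(label, []).append(row)
    return groups


def _resample(groups, target, rng):
    """Bring every class to `target` rows: sample without replacement if the class
    is larger, otherwise keep all its rows and add random duplicates."""
    out_rows, out_labels = [], []
    for label, members in groups.items():
        if len(members) >= target:
            chosen = rng.sample(members, target)
        else:
            chosen = members + [rng.choice(members) for _ in range(target - len(members))]
        out_rows.extend(chosen)
        out_labels.extend([label] * target)
    return out_rows, out_labels


def random_under_sample(rows, labels, seed=0):
    groups = _group_by_class(rows, labels)
    return _resample(groups, min(len(m) for m in groups.values()), random.Random(seed))


def random_over_sample(rows, labels, seed=0):
    groups = _group_by_class(rows, labels)
    return _resample(groups, max(len(m) for m in groups.values()), random.Random(seed))


def combined_sample(rows, labels, seed=0):
    # Hypothetical combined scheme: shrink the majority class and grow the
    # minority class until both reach the midpoint size.
    groups = _group_by_class(rows, labels)
    sizes = [len(m) for m in groups.values()]
    return _resample(groups, (min(sizes) + max(sizes)) // 2, random.Random(seed))


# Hypothetical imbalanced loan data: 90 "Good" loans and 10 "Risk" loans.
rows = [{"loan_id": i} for i in range(100)]
labels = ["Good"] * 90 + ["Risk"] * 10

for name, fn in [("under", random_under_sample), ("over", random_over_sample),
                 ("combined", combined_sample)]:
    _, y = fn(rows, labels)
    print(name, Counter(y))
```

In practice, resampling is applied only to the training split, so that evaluation still reflects the real class balance.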


Open Access Article

Temporal Dynamics of Citizen-Reported Urban Challenges: A Comprehensive Time Series Analysis

by

Andreas F. Gkontzis, Sotiris Kotsiantis, Georgios Feretzakis and Vassilios S. Verykios

Big Data Cogn. Comput. 2024, 8(3), 27; https://doi.org/10.3390/bdcc8030027 - 04 Mar 2024

Abstract

In an epoch characterized by the swift pace of digitalization and urbanization, the essence of community well-being hinges on the efficacy of urban management. As cities burgeon and transform, the need for astute strategies to navigate the complexities of urban life becomes increasingly

[...] Read more.

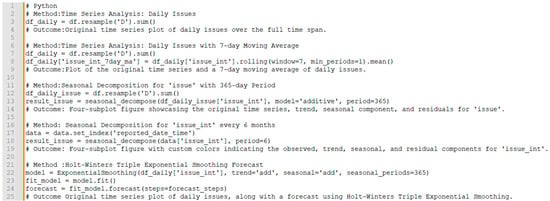

In an era of rapid digitalization and urbanization, community well-being hinges on the efficacy of urban management. As cities grow and transform, the need for astute strategies to navigate the complexities of urban life becomes increasingly pressing. This study employs time series analysis to examine citizen interactions with the coordinate-based problem mapping platform in the Municipality of Patras, Greece. The research explores the temporal dynamics of reported urban issues, focusing on identifying recurring patterns through the lens of seasonality. Using the seasonal decomposition technique, the analysis breaks the time series data into components to expose trends in reported issues, and in areas of the city, that might be obscured in raw big data. It reveals a distinct seasonal pattern, with reports peaking during the summer months. The study extends this approach to forecasting, providing insights into the anticipated evolution of urban issues over time. Projections for the coming years show a consistent upward trend both in overall city issues and in those reported in specific areas, with distinct seasonal variations. This comprehensive exploration of time series analysis and seasonality provides valuable insights for city stakeholders, supporting informed decision-making and predictions of future urban challenges.


(This article belongs to the Special Issue Big Data and Information Science Technology)
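The seasonal decomposition step in the Patras abstract can be illustrated with the classical additive method: a centred moving average estimates the trend, and averaging the detrended values by calendar month gives the seasonal component. This is a minimal sketch, assuming monthly aggregation, a 12-month period, and an additive model; the abstract does not specify these choices, and the report counts below are synthetic.

```python
def centred_moving_average(series, period):
    """Trend estimate: centred moving average (a 2 x period average when period is even)."""
    half = period // 2
    trend = [None] * len(series)  # undefined for the first and last `half` points
    for t in range(half, len(series) - half):
        window = series[t - half:t + half + 1]
        if period % 2 == 0:
            # period + 1 points, with half weight on the two end points.
            trend[t] = (0.5 * window[0] + sum(window[1:-1]) + 0.5 * window[-1]) / period
        else:
            trend[t] = sum(window) / period
    return trend


def additive_decompose(series, period):
    """Classical additive decomposition: series = trend + seasonal + residual."""
    trend = centred_moving_average(series, period)
    buckets = [[] for _ in range(period)]
    for t, (y, tr) in enumerate(zip(series, trend)):
        if tr is not None:
            buckets[t % period].append(y - tr)
    raw = [sum(b) / len(b) for b in buckets]
    # Centre the seasonal indices so they sum to zero over one period.
    offset = sum(raw) / period
    indices = [s - offset for s in raw]
    seasonal = [indices[t % period] for t in range(len(series))]
    residual = [None if tr is None else y - tr - s
                for y, tr, s in zip(series, trend, seasonal)]
    return trend, seasonal, residual


# Synthetic monthly report counts over three years (January = index 0):
# a linear upward trend plus a summer peak.
pattern = [-5, -5, -5, -5, 0, 10, 15, 15, 5, -5, -10, -10]
reports = [100 + 2 * t + pattern[t % 12] for t in range(36)]

trend, seasonal, residual = additive_decompose(reports, period=12)
print("Seasonal index by month:", [round(s, 2) for s in seasonal[:12]])
```

Because the synthetic series is exactly a linear trend plus a zero-sum monthly pattern, the decomposition recovers the pattern and leaves near-zero residuals. Forecasting, as in the study, would extend the trend and reuse the seasonal indices; that step is not shown.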


Open Access Article

Democratic Erosion of Data-Opolies: Decentralized Web3 Technological Paradigm Shift Amidst AI Disruption

by

Igor Calzada

Big Data Cogn. Comput. 2024, 8(3), 26; https://doi.org/10.3390/bdcc8030026 - 26 Feb 2024

Abstract


This article investigates the intricate dynamics of data monopolies, referred to as “data-opolies”, and their implications for democratic erosion. Data-opolies, typically embodied by large technology corporations, accumulate extensive datasets, affording them significant influence. The sustainability of such data practices is critically examined within the context of decentralized Web3 technologies amidst Artificial Intelligence (AI) disruption. Additionally, the article explores emancipatory datafication strategies to counterbalance the dominance of data-opolies. It presents an in-depth analysis of two phenomena emerging within the decentralized Web3 landscape: People-Centered Smart Cities and Datafied Network States. The article investigates a paradigm shift in data governance and advocates joint efforts to establish equitable data ecosystems, with an emphasis on prioritizing data sovereignty and achieving digital self-governance. It elucidates the key roles of (i) blockchain, (ii) decentralized autonomous organizations (DAOs), and (iii) data cooperatives in empowering citizens to control their personal data. In conclusion, the article offers a forward-looking examination of Web3 decentralized technologies, outlining a timely path toward a more transparent, inclusive, and emancipatory data-driven democracy. This approach challenges the prevailing dominance of data-opolies and offers a framework for regenerating datafied democracies through decentralized and emerging Web3 technologies.


Open Access Article

Sign-to-Text Translation from Panamanian Sign Language to Spanish in Continuous Capture Mode with Deep Neural Networks

by

Alvaro A. Teran-Quezada, Victor Lopez-Cabrera, Jose Carlos Rangel and Javier E. Sanchez-Galan

Big Data Cogn. Comput. 2024, 8(3), 25; https://doi.org/10.3390/bdcc8030025 - 26 Feb 2024

Abstract

Convolutional neural networks (CNN) have provided great advances for the task of sign language recognition (SLR). However, recurrent neural networks (RNN) in the form of long–short-term memory (LSTM) have become a means for providing solutions to problems involving sequential data. This research proposes

[...] Read more.

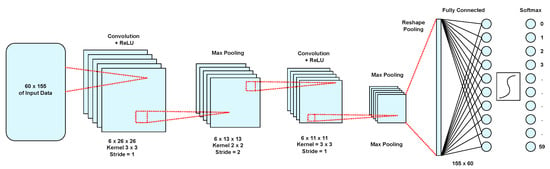

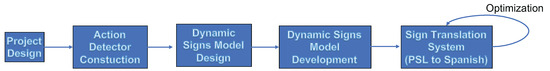

Convolutional neural networks (CNNs) have driven great advances in sign language recognition (SLR). However, recurrent neural networks (RNNs) in the form of long short-term memory (LSTM) networks have become a means of solving problems involving sequential data. This research proposes a sign language translation system that converts Panamanian Sign Language (PSL) signs into Spanish text using an LSTM model that, among other things, makes it possible to work with non-static signs (as sequential data). The deep learning model focuses on action detection, in this case, the execution of the signs. This involves precisely processing the frames in which a sign language gesture is made. The proposal is a holistic solution that considers not only the signer's hands but also the face and pose. These were added because, when communicating through sign languages, visual characteristics beyond hand gestures matter. To train this system, a dataset of 330 videos (of 30 frames each) covering five possible classes (different signs) was created. In testing, the model achieved an accuracy of 98.8%, making it a valuable base system for effective communication between PSL users and Spanish speakers. In conclusion, this work improves on the state of the art for PSL–Spanish translation by using deep learning to translate signs.


(This article belongs to the Special Issue Advances and Applications of Deep Learning Methods and Image Processing)
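To make the sequential-data argument in the PSL abstract concrete, the sketch below runs a single LSTM layer over a 30-frame clip and maps the final hidden state to five class probabilities, matching the 30 frames per video and five signs mentioned in the abstract. It is a forward pass only, with random untrained weights; the feature size (8 values per frame), hidden size (16), and weight initialisation are hypothetical, and the authors' actual architecture and training setup are not described in the abstract. A real model would be built and trained in a deep learning framework rather than in plain Python.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def matvec(matrix, vec):
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


class LSTMCell:
    """Single LSTM layer with input (i), forget (f), candidate (g) and output (o) gates."""

    def __init__(self, input_size, hidden_size, seed=0):
        rng = random.Random(seed)
        self.hidden_size = hidden_size
        cols = input_size + hidden_size
        # One weight matrix per gate, applied to the concatenation [x_t, h_{t-1}].
        self.W = {gate: [[rng.uniform(-0.1, 0.1) for _ in range(cols)]
                         for _ in range(hidden_size)] for gate in "ifgo"}
        self.b = {gate: [0.0] * hidden_size for gate in "ifgo"}
        self.b["f"] = [1.0] * hidden_size  # common practice: start with the forget gate open

    def step(self, x, h, c):
        z = x + h  # list concatenation [x_t, h_{t-1}]
        pre = {gate: [a + b for a, b in zip(matvec(self.W[gate], z), self.b[gate])]
               for gate in "ifgo"}
        i = [sigmoid(v) for v in pre["i"]]
        f = [sigmoid(v) for v in pre["f"]]
        g = [math.tanh(v) for v in pre["g"]]
        o = [sigmoid(v) for v in pre["o"]]
        c_new = [fv * cv + iv * gv for fv, cv, iv, gv in zip(f, c, i, g)]
        h_new = [ov * math.tanh(cv) for ov, cv in zip(o, c_new)]
        return h_new, c_new

    def run(self, frames):
        """Feed a sequence of per-frame feature vectors; return the final hidden state."""
        h = [0.0] * self.hidden_size
        c = [0.0] * self.hidden_size
        for x in frames:
            h, c = self.step(x, h, c)
        return h


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical clip: 30 frames, each an 8-value vector of hand/face/pose keypoint features.
rng = random.Random(42)
clip = [[rng.uniform(-1, 1) for _ in range(8)] for _ in range(30)]

cell = LSTMCell(input_size=8, hidden_size=16)
h_final = cell.run(clip)
# Untrained output layer mapping the final hidden state to five sign classes.
W_out = [[rng.uniform(-0.1, 0.1) for _ in range(16)] for _ in range(5)]
probs = softmax(matvec(W_out, h_final))
print([round(p, 3) for p in probs])
```

The cell state carried across frames is what lets the model represent non-static signs; a frame-by-frame CNN classifier has no such memory.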


Open Access Article

Experimental Evaluation: Can Humans Recognise Social Media Bots?

by

Maxim Kolomeets, Olga Tushkanova, Vasily Desnitsky, Lidia Vitkova and Andrey Chechulin

Big Data Cogn. Comput. 2024, 8(3), 24; https://doi.org/10.3390/bdcc8030024 - 26 Feb 2024

Abstract

This paper aims to test the hypothesis that the quality of social media bot detection systems based on supervised machine learning may not be as accurate as researchers claim, given that bots have become increasingly sophisticated, making it difficult for human annotators to

[...] Read more.

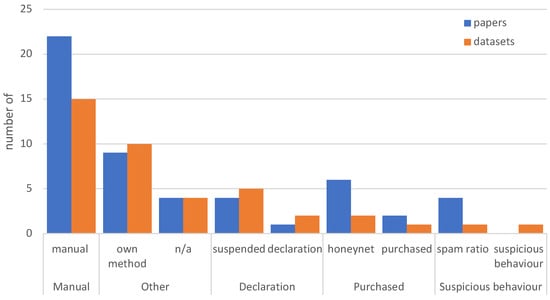

This paper aims to test the hypothesis that social media bot detection systems based on supervised machine learning may not be as accurate as researchers claim, given that bots have become increasingly sophisticated, making it difficult for human annotators to detect them better than random selection. As a result, obtaining a ground-truth dataset through human annotation is not possible, which leads supervised machine learning models to inherit annotation errors. To test this hypothesis, we conducted an experiment in which humans were tasked with recognizing malicious bots on the VKontakte social network. We then compared the “human” answers with the “ground-truth” bot labels (‘a bot’/‘not a bot’). Based on the experiment, we evaluated the bot detection efficiency of annotators in three scenarios that are typical in cybersecurity but differ in detection difficulty: (1) detection among random accounts, (2) detection among accounts of a social network ‘community’, and (3) detection among verified accounts. The study showed that humans could detect only simple bots in all three scenarios and could not detect more sophisticated ones (p-value = 0.05). The study also evaluates the limits of hypothetical and existing bot detection systems that rely on non-expert-labelled datasets: the balanced accuracy of such systems can drop to 0.5 or lower, depending on bot complexity and detection scenario. The paper also describes the experiment design, collected datasets, statistical evaluation, and machine learning accuracy measures applied to support the results. In the discussion, we raise the question of using human labelling in bot detection systems and its potential cybersecurity issues. We also provide open access on GitHub to the datasets, experiment results, and software code used in this paper to evaluate the statistical and machine learning accuracy metrics.


(This article belongs to the Special Issue Security, Privacy, and Trust in Artificial Intelligence Applications)
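The bot detection abstract reports results as balanced accuracy, where 0.5 is the score of a binary classifier guessing at chance. Balanced accuracy is the mean of per-class recall (the definition used, for example, by scikit-learn's `balanced_accuracy_score`). A minimal Python sketch follows; the accounts and both annotators are hypothetical, and the example shows why an annotator who labels every account as human scores 0.5 even though their plain accuracy here is 0.6.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so each class counts equally regardless of its size."""
    recalls = []
    for cls in sorted(set(y_true)):
        positions = [k for k, y in enumerate(y_true) if y == cls]
        hits = sum(1 for k in positions if y_pred[k] == cls)
        recalls.append(hits / len(positions))
    return sum(recalls) / len(recalls)


# Hypothetical ground truth for 10 accounts and two hypothetical annotators.
truth = ["bot"] * 4 + ["human"] * 6
says_all_human = ["human"] * 10
partial = ["bot", "bot", "human", "human"] + ["human"] * 5 + ["bot"]

print(balanced_accuracy(truth, says_all_human))  # 0.5
print(round(balanced_accuracy(truth, partial), 3))  # 0.667
```

The second annotator catches half of the bots (recall 0.5) and five of six humans (recall 5/6), giving a balanced accuracy of about 0.667.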


Open Access Article

Solar and Wind Data Recognition: Fourier Regression for Robust Recovery

by

Abdullah F. Al-Aboosi, Aldo Jonathan Muñoz Vazquez, Fadhil Y. Al-Aboosi, Mahmoud El-Halwagi and Wei Zhan

Big Data Cogn. Comput. 2024, 8(3), 23; https://doi.org/10.3390/bdcc8030023 - 24 Feb 2024

Abstract

►▼

Show Figures

Accurate prediction of renewable energy output is essential for integrating sustainable energy sources into the grid, facilitating a transition towards a more resilient energy infrastructure. Novel applications of machine learning and artificial intelligence are being leveraged to enhance forecasting methodologies, enabling more accurate

[...] Read more.

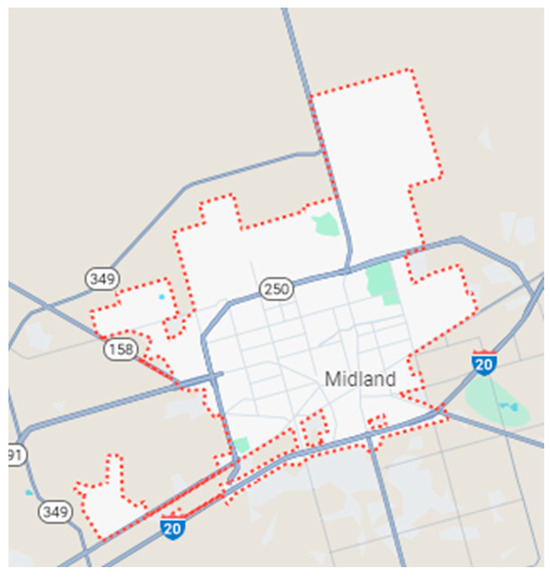

Accurate prediction of renewable energy output is essential for integrating sustainable energy sources into the grid and for the transition to a more resilient energy infrastructure. Novel applications of machine learning and artificial intelligence are being leveraged to enhance forecasting methodologies, enabling more accurate predictions and better-optimized decision-making. Integrating these paradigms improves forecasting accuracy, fostering a more efficient and reliable energy grid. These advancements enable better demand management, optimized resource allocation, and greater robustness to potential disruptions. Solar intensity and wind speed data are often recorded by sensor-equipped instruments, which may experience intermittent or permanent faults. Hence, this paper proposes a novel Fourier network regression model to process solar irradiance and wind speed data. The proposed approach accurately predicts the underlying smooth components, enabling effective reconstruction of missing data and improving overall forecasting performance. The study uses Midland, Texas, as a case study to assess direct normal irradiance (DNI), diffuse horizontal irradiance (DHI), and wind speed. The model exhibits a correlation of 1 with a minimal RMSE (root mean square error) of 0.0007555. This study applies Fourier analysis to renewable energy, with the aim of establishing a methodology that can be applied to new geographic contexts.
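The Midland study reconstructs missing sensor readings from smooth periodic components. As a rough illustration only, the sketch below uses classical least-squares Fourier-series regression rather than the paper's Fourier network regression model, whose architecture the abstract does not describe. It assumes hourly readings with a 24-hour cycle and two harmonics; the wind-speed series and the five-hour fault gap are synthetic.

```python
import math


def fourier_row(t, period, harmonics):
    """Design-matrix row [1, cos(w t), sin(w t), cos(2 w t), sin(2 w t), ...]."""
    row = [1.0]
    for k in range(1, harmonics + 1):
        angle = 2 * math.pi * k * t / period
        row += [math.cos(angle), math.sin(angle)]
    return row


def solve(A, b):
    """Solve A x = b with Gaussian elimination and partial pivoting."""
    n = len(A)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x


def fit_fourier(times, values, period, harmonics):
    """Least-squares Fourier coefficients, fitted only on observed (non-None) readings."""
    observed = [(t, v) for t, v in zip(times, values) if v is not None]
    X = [fourier_row(t, period, harmonics) for t, _ in observed]
    y = [v for _, v in observed]
    p = len(X[0])
    # Normal equations: (X^T X) beta = X^T y.
    XtX = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    Xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(XtX, Xty)


def predict(coef, t, period, harmonics):
    return sum(c * f for c, f in zip(coef, fourier_row(t, period, harmonics)))


def rmse(actual, predicted):
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


# Synthetic hourly wind speed (m/s) over three days with a daily cycle.
hours = list(range(72))
true_speed = [6 + 2 * math.cos(2 * math.pi * t / 24) + 0.5 * math.sin(4 * math.pi * t / 24)
              for t in hours]
# Simulate a sensor fault: readings missing for hours 10-14 of day two.
gap = set(range(34, 39))
recorded = [None if t in gap else v for t, v in zip(hours, true_speed)]

coef = fit_fourier(hours, recorded, period=24, harmonics=2)
filled = [predict(coef, t, 24, 2) for t in sorted(gap)]
print("RMSE on gap:", rmse([true_speed[t] for t in sorted(gap)], filled))
```

Because the synthetic signal lies exactly in the span of the chosen harmonics, the gap is recovered almost perfectly; on real DNI, DHI, or wind data, the reconstruction error would depend on the number of harmonics and the noise level.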



Topics

Topic in

AI, Algorithms, BDCC, Future Internet, Informatics, Information, Languages, Publications

AI Chatbots: Threat or Opportunity?

Topic Editors: Antony Bryant, Roberto Montemanni, Min Chen, Paolo Bellavista, Kenji Suzuki, Jeanine Treffers-Daller

Deadline: 30 April 2024

Topic in

Algorithms, BDCC, BioMedInformatics, Information, Mathematics

Machine Learning Empowered Drug Screen

Topic Editors: Teng Zhou, Jiaqi Wang, Youyi Song

Deadline: 31 August 2024

Topic in

BDCC, Entropy, Information, MCA, Mathematics

New Advances in Granular Computing and Data Mining

Topic Editors: Xibei Yang, Bin Xie, Pingxin Wang, Hengrong Ju

Deadline: 30 October 2024

Topic in

Electronics, Applied Sciences, BDCC, Mathematics, Chips

Theory and Applications of High Performance Computing

Topic Editors: Pavel Lyakhov, Maxim Deryabin

Deadline: 30 November 2024


Special Issues

Special Issue in

BDCC

Machine Learning for Dependable Edge Computing Systems and Services

Guest Editors: Renyu Yang, Zhenyu Wen, Xu Wang, Prosanta Gope, Bin Shi

Deadline: 30 April 2024

Special Issue in

BDCC

Multimedia Systems for Multimedia Big Data

Guest Editors: Michael Alexander Riegler, Pål Halvorsen

Deadline: 31 May 2024

Special Issue in

BDCC

Predictive Performance-Explainability Duality for Big Data Analytics-Powered Healthcare

Guest Editors: Luca Parisi, Mansour Youseffi, Renfei Ma

Deadline: 21 June 2024

Special Issue in

BDCC

Machine Learning in Data Mining for Knowledge Discovery

Guest Editors: Cong Gao, Chuntao Ding

Deadline: 30 June 2024

Topical Collections

Topical Collection in

BDCC

Machine Learning and Artificial Intelligence for Health Applications on Social Networks

Collection Editor: Carmela Comito